In preparation for my presentation at the DOAG Database with Cloud Infrastructure (#DOAGDB26) conference, I built a small Data Guard test environment and created the standby database using Oracle Enterprise Manager (OEM).

After creating a physical standby database via Oracle Enterprise Manager and registering it with Oracle Restart, srvctl commands failed with:

PRCD-1024 : Failed to retrieve instance list for database DEMODB_BARDG02

PRCR-1055 : Cluster membership check failed for node bardg02

I set up my environment as follows:

- Created a virtual machine named bardg01

- Installed Oracle Enterprise Linux 9.4

- Copied the gold images for:

  - Oracle Grid Infrastructure 19.28

  - Oracle Database 19.28

- Unzipped both into their respective directories

- Installed the required oracle-database-preinstall-* packages

- Configured the grid user

After that, I cloned the VM, adjusted the network configuration, and two identical systems were available:

- bardg01

- bardg02

Grid Infrastructure and Database Installation

bardg01

On bardg01, I installed Oracle Grid Infrastructure for Standalone Server (Oracle Restart) without ASM.

I performed a software‑only installation and configured Oracle Restart afterwards.

The installation completed without issues.

After that, I installed the RDBMS software and created a database using DBCA.

Everything worked as expected.

bardg02

On bardg02, I repeated the same Grid Infrastructure and RDBMS installation steps.

At this stage, I did not create a database.

Creating the Standby Database with OEM

After installing the OEM agents on both hosts, I created a physical standby database via OEM.

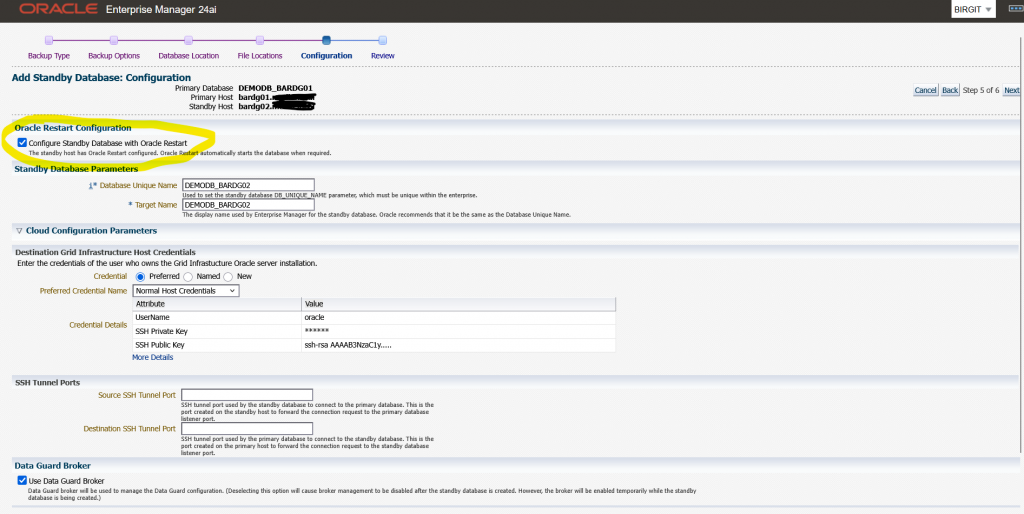

During the wizard I selected the option to “Configure Standby Database with Oracle Restart”

See the image below:

The OEM job finished successfully.

However, after reviewing the environment, I noticed that:

- The standby database was registered with Oracle Restart

- The database itself was running, but was shown as offline

grid@bardg02 ~> crsctl stat res -t

--------------------------------------------------------------------------------

Name Target State Server State details

--------------------------------------------------------------------------------

Local Resources

--------------------------------------------------------------------------------

ora.LISTENER.lsnr

ONLINE ONLINE bardg02 STABLE

ora.ons

OFFLINE OFFLINE bardg02 STABLE

--------------------------------------------------------------------------------

Cluster Resources

--------------------------------------------------------------------------------

ora.cssd

1 OFFLINE OFFLINE STABLE

ora.demodb_bardg02.db

1 OFFLINE OFFLINE STABLE

ora.diskmon

1 OFFLINE OFFLINE STABLE

ora.evmd

1 ONLINE ONLINE bardg02 STABLE

--------------------------------------------------------------------------------

At this point, I simply wanted to check the status of the standby database.

oracle@bardg02 ~> . oraenv

ORACLE_SID = [DEMODB] ? DEMODB

The Oracle base remains unchanged with value /u01/app/oracle

oracle@bardg02 ~> srvctl status database -db demodb_bardg02

PRCD-1024 : Failed to retrieve instance list for database DEMODB_BARDG02

PRCR-1055 : Cluster membership check failed for node bardg02

In my environment, every srvctl command on this host resulted in the same error combination.

On my primary host bardg01 every srvctl command was successful.

At this point, I verified a few things:

- The Grid Infrastructure installation had completed without errors

- I had not manually changed the CSSD configuration

- On both hosts, cssd was OFFLINE

I searched online and in MOS and came to the conclusion that this error pair is typically triggered by cluster membership checks performed by srvctl, even in Oracle Restart setups without ASM.
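Because the two messages always appeared together, a wrapper script can look for the pair in the srvctl output and point straight at CSSD. The following is only a minimal sketch: the function name `has_membership_error` is hypothetical, and the database name is the one from my test environment.

```shell
# Return success (exit code 0) if the text on stdin contains both
# PRCD-1024 and PRCR-1055, the error pair described in this post.
has_membership_error() {
    awk '/PRCD-1024/ { a = 1 } /PRCR-1055/ { b = 1 } END { exit !(a && b) }'
}

# Only call srvctl where it is actually installed.
if command -v srvctl >/dev/null 2>&1; then
    if srvctl status database -db demodb_bardg02 2>&1 | has_membership_error; then
        echo "srvctl failed the cluster membership check; check the state of ora.cssd"
    fi
fi
```

The function only matches the error codes, so it does not depend on the exact wording or the database name in the messages.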

Starting CSSD

As a next test, I configured CSSD to start automatically after a reboot, started it, and then restarted Oracle High Availability Services.

grid@bardg02 ~> crsctl modify resource ora.cssd -attr "AUTO_START=always" -unsupported

grid@bardg02 ~> crsctl start res ora.cssd -unsupported

grid@bardg02 ~> crsctl stop has

CRS-2791: Starting shutdown of Oracle High Availability Services-managed resources on 'bardg02'

CRS-2673: Attempting to stop 'ora.LISTENER.lsnr' on 'bardg02'

CRS-2677: Stop of 'ora.LISTENER.lsnr' on 'bardg02' succeeded

CRS-2673: Attempting to stop 'ora.evmd' on 'bardg02'

CRS-2677: Stop of 'ora.evmd' on 'bardg02' succeeded

CRS-2793: Shutdown of Oracle High Availability Services-managed resources on 'bardg02' has completed

CRS-4133: Oracle High Availability Services has been stopped.

grid@bardg02 ~> crsctl start has

CRS-4123: Oracle High Availability Services has been started.

grid@bardg02 ~> crsctl stat res -t

--------------------------------------------------------------------------------

Name Target State Server State details

--------------------------------------------------------------------------------

Local Resources

--------------------------------------------------------------------------------

ora.LISTENER.lsnr

ONLINE ONLINE bardg02 STABLE

ora.ons

OFFLINE OFFLINE bardg02 STABLE

--------------------------------------------------------------------------------

Cluster Resources

--------------------------------------------------------------------------------

ora.cssd

1 ONLINE ONLINE bardg02 STABLE

ora.demodb_bardg02.db

1 ONLINE INTERMEDIATE bardg02 Mounted (Closed),HOM

E=/u01/app/oracle/pr

oduct/19.28/db_home1

,STABLE

ora.diskmon

1 OFFLINE OFFLINE STABLE

ora.evmd

1 ONLINE ONLINE bardg02 STABLE

--------------------------------------------------------------------------------

After that, I retried the srvctl command:

oracle@bardg02 ~> srvctl status database -db DEMODB_BARDG02

Database is running.

Attribute HOSTING_MEMBERS

To better understand the behaviour, I ran some additional tests with the database resource attribute HOSTING_MEMBERS.

grid@bardg02 ~> crsctl modify resource "ora.demodb_bardg02.db" -attr "HOSTING_MEMBERS=" -unsupported

grid@bardg02 ~> crsctl stat res ora.demodb_bardg02.db -f |grep HOSTING_

HOSTING_MEMBERS=

All srvctl commands ran successfully:

oracle@bardg02 ~> srvctl status database -db DEMODB_BARDG02

Database is running.

oracle@bardg02 ~> srvctl stop database -db DEMODB_BARDG02

oracle@bardg02 ~> srvctl status database -db DEMODB_BARDG02

Database is not running.

oracle@bardg02 ~> srvctl start database -db DEMODB_BARDG02

oracle@bardg02 ~> srvctl status database -db DEMODB_BARDG02

Database is running.

With this configuration, the database could be managed even when cssd was OFFLINE.

Next test:

- HOSTING_MEMBERS=bardg02

- cssd = OFFLINE

All srvctl commands failed again with:

PRCD-1024 : Failed to retrieve instance list for database demodb_bardg02

PRCR-1055 : Cluster membership check failed for node bardg02

Next test:

- HOSTING_MEMBERS=bardg02

- cssd = ONLINE

All srvctl commands ran successfully
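The outcome of these tests can be summarized as a small decision function. This is only a sketch of what I observed, not a description of how srvctl works internally. The function name `srvctl_expected` is hypothetical, and the combination of an empty HOSTING_MEMBERS with cssd ONLINE is treated as successful, which is an assumption because it is not listed as a separate test above.

```shell
# Predict the srvctl outcome from the two values varied in the tests.
# $1: value of HOSTING_MEMBERS (may be empty), $2: state of ora.cssd.
srvctl_expected() {
    hosting_members=$1
    cssd_state=$2
    if [ -n "$hosting_members" ] && [ "$cssd_state" != "ONLINE" ]; then
        echo "FAIL"   # observed: PRCD-1024 / PRCR-1055
    else
        echo "OK"
    fi
}

srvctl_expected ""        OFFLINE   # prints OK
srvctl_expected "bardg02" OFFLINE   # prints FAIL
srvctl_expected "bardg02" ONLINE    # prints OK
```

In other words, in my tests the error only appeared when HOSTING_MEMBERS was set and cssd was not running at the same time.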

Conclusion

Based on my tests, I can conclude the following:

- After installing Grid Infrastructure for a standalone server, cssd may initially be OFFLINE

- Databases created with DBCA work without issues

- Databases added later (for example via OEM Data Guard) can fail with PRCD-1024 / PRCR-1055 if cssd is not running

- When HOSTING_MEMBERS is used, srvctl clearly relies on cssd, even in Oracle Restart setups without ASM

Recommendation

From my perspective, there are two possible options:

- Start and enable CSSD

  - Ensure cssd starts automatically after reboot

  - This is the recommended and clean solution

- Remove HOSTING_MEMBERS from the database resource

  - This works technically

  - However, it relies on unsupported configuration changes

My recommendation:

After installing Oracle Grid Infrastructure for a standalone server, always verify that CSSD is online and configured to start automatically.
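A check like this can be scripted as part of a post-installation routine. The sketch below reads the output of `crsctl stat res ora.cssd -f` and reports whether the resource is ONLINE with AUTO_START=always. It assumes that the output contains KEY=VALUE lines such as `AUTO_START=always` and `STATE=ONLINE on bardg02`; the function name `cssd_check` is hypothetical.

```shell
# Read `crsctl stat res ora.cssd -f` output on stdin and report whether
# the resource is ONLINE and configured to start automatically.
# Assumes KEY=VALUE lines, e.g. "AUTO_START=always", "STATE=ONLINE on bardg02".
cssd_check() {
    awk -F= '
        $1 == "AUTO_START" { auto = $2 }
        $1 == "STATE"      { split($2, s, " "); state = s[1] }
        END {
            if (state == "ONLINE" && auto == "always") { print "cssd OK"; exit 0 }
            printf "cssd needs attention: STATE=%s AUTO_START=%s\n", state, auto
            exit 1
        }'
}

# Only call crsctl where it is actually installed.
if command -v crsctl >/dev/null 2>&1; then
    crsctl stat res ora.cssd -f | cssd_check || true
fi
```

The function returns a non-zero exit code when either condition is not met, so it can also be used as a gate in a larger provisioning script.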

Hope that helps!